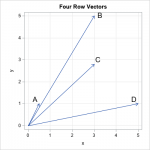

If you consider the cosine function, its value at 0 degrees is 1 and at 180 degrees is -1. This means the cosine is at its maximum for two overlapping vectors and at its minimum for two precisely opposite vectors. Cosine similarity measures the similarity between two vectors by calculating the cosine of the angle between them. Mathematically, the cosine similarity is expressed by the following formula:

$$\text{cosine}(a, b) = \frac{\sum_{i=1}^{m} a_i b_i}{\sqrt{\sum_{i=1}^{m} a_i^2}\,\sqrt{\sum_{i=1}^{m} b_i^2}}$$

In this simple example, the cosine of the angle between the two vectors, $\cos(\theta)$, is our measure of the similarity between the two documents. The Euclidean distance is simply the distance between two vectors $x_1$ and $x_2$ in some $k$-dimensional hyperspace.

In fact, you can directly convert between the two. This is a pretty simple and well-known property that is used in many machine learning packages that work with embeddings, which are just vectors. My own package Magnitude uses it as well when ranking results retrieved with k-NN.

Every now and then I am asked about this, and surprisingly I am never able to point someone who might not understand this property to a web page describing it, as Google gives no good search results for an explainer. Hopefully this will rank on Google so others searching for it can find a useful resource. It's intuitive if you are comfortable with manipulating vectors and can visualize what cosine similarity and Euclidean distance mean for unit-length normalized vectors.

Below, I'll describe what the relationship is and how to convert from Euclidean distance to cosine similarity (and vice versa). Both conversions assume unit-length vectors, for which $d^2 = \|x_1\|^2 + \|x_2\|^2 - 2\,x_1 \cdot x_2 = 2 - 2s$.

Here is how you convert from cosine similarity $s$ ($-1$ to $1$) to Euclidean distance $d$ ($0$ to $2$):

$$d = \sqrt{2(1 - s)}$$

Here is how you convert from Euclidean distance $d$ ($0$ to $2$) to cosine similarity $s$ ($-1$ to $1$):

$$s = 1 - \frac{d^2}{2}$$

Because the transformation between these two measures is monotonic, they will both give the same ordering when used to rank: the pair with the highest similarity is also the pair with the smallest distance.
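The conversion between cosine similarity and Euclidean distance can be checked numerically. The following is a minimal sketch, assuming unit-length vectors and the standard identities $d = \sqrt{2 - 2s}$ and $s = 1 - d^2/2$. The helper names and the toy vectors are made up for illustration and are not taken from Magnitude or any other package.

```python
import math

def normalize(v):
    """Scale v to unit length (assumes v is not the zero vector)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine_similarity(a, b):
    """Cosine of the angle between a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between a and b."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def cosine_to_euclidean(s):
    """d = sqrt(2 - 2s); only valid for unit-length vectors."""
    return math.sqrt(max(0.0, 2.0 - 2.0 * s))

def euclidean_to_cosine(d):
    """s = 1 - d^2 / 2; only valid for unit-length vectors."""
    return 1.0 - d * d / 2.0

# Hypothetical query and candidate vectors, normalized to unit length.
query = normalize([1.0, 2.0, 3.0])
candidates = [
    normalize(v)
    for v in ([3.0, 2.0, 1.0], [-1.0, 0.5, 2.0], [1.0, 2.0, 2.5], [-3.0, -2.0, -1.0])
]

sims = [cosine_similarity(query, c) for c in candidates]
dists = [euclidean_distance(query, c) for c in candidates]

# Rank candidates by descending similarity and by ascending distance.
by_similarity = sorted(range(len(candidates)), key=lambda i: -sims[i])
by_distance = sorted(range(len(candidates)), key=lambda i: dists[i])

print(by_similarity == by_distance)  # the two rankings should agree
```

Without the normalization step the identities no longer hold, so the two rankings can differ for vectors of unequal length.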
For unit-length vectors, both the cosine similarity and Euclidean distance measures can be used for ranking with the same order. The cosine similarity measures the similarity between two vectors by calculating the cosine of the angle between them.

I know for a fact that the dot product and the cosine function can be positive or negative, depending on the angle between the vectors. But I really have a hard time understanding and interpreting this negative cosine similarity.

For example, if I have a pair of words giving a similarity of -0.1, are they less similar than another pair whose similarity is 0.05? How about comparing a similarity of -0.9 to 0.8? Or should I just look at the absolute value of the minimal angle difference from $n\pi$? The absolute value of the scores?

It's right that cosine similarity between frequency vectors cannot be negative, as word counts cannot be negative, but with word embeddings (such as GloVe) you can have negative values.

A simplified view of word-embedding construction is as follows: You assign each word to a random vector in $\mathbb{R}^d$. Next, you run an optimizer that tries to nudge two similar vectors v1 and v2 close to each other or drive two dissimilar vectors v3 and v4 further apart (as per some distance, say cosine). You run this optimization for enough iterations, and at the end you have word embeddings with the sole criterion that similar words have closer vectors and dissimilar words have vectors that are farther apart. The end result might leave you with some dimension values being negative and some pairs having negative cosine similarity, simply because the optimization process did not care about this criterion. It may have nudged some vectors well into negative values. The dimensions of the vectors don't correspond to word counts; they are just some arbitrary latent concepts that admit values from $-\infty$ to $\infty$.

A pandas and SciPy snippet for computing the similarities starts as follows: `import pandas as pd`, `from scipy import spatial`, `df = pd.DataFrame([X, Y, Z]).T`, `similarities = df.values.`
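The question of how to compare a similarity of -0.1 with 0.05, or -0.9 with 0.8, can be illustrated with a small sketch. It assumes the usual reading of cosine similarity as a signed score where larger means more similar, so scores are compared as plain numbers and not by absolute value. The three vectors `X`, `Y`, `Z` are hypothetical toy embeddings, not values from any trained model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical 3-dimensional embeddings with negative components.
embeddings = {
    "X": [0.9, 0.1, -0.2],
    "Y": [0.8, 0.3, -0.1],
    "Z": [-0.7, -0.2, 0.4],
}

# Score every unordered pair of vectors.
names = sorted(embeddings)
pairs = [(a, b) for i, a in enumerate(names) for b in names[i + 1:]]
scores = {
    pair: cosine_similarity(embeddings[pair[0]], embeddings[pair[1]])
    for pair in pairs
}

# Most similar pair first: sort by the signed score.
ranked = sorted(scores, key=scores.get, reverse=True)

# The scores from the question, ordered the same way.
question_scores = [-0.1, 0.05, -0.9, 0.8]
signed_order = sorted(question_scores, reverse=True)

# Sorting by absolute value would wrongly put -0.9 ahead of 0.8.
abs_order = sorted(question_scores, key=abs, reverse=True)

for pair in ranked:
    print(pair, round(scores[pair], 3))
print(signed_order)
print(abs_order)
```

Under this reading, a pair scoring -0.1 is less similar than a pair scoring 0.05, and a pair scoring -0.9 points in nearly opposite directions, which makes it far less similar than a pair scoring 0.8.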

To set the standard deviation to 1, we divide every value in the column by the standard deviation.

I am used to the concept of cosine similarity of frequency vectors, whose values are bounded in $[0, 1]$. I was trying to use the GloVe model pre-trained by the Stanford NLP group. However, I noticed that my similarity results showed some negative numbers. That immediately prompted me to look at the word-vector data file. Apparently, the values in the word vectors were allowed to be negative. That explained why I saw negative cosine similarities.
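The contrast between frequency vectors and embedding vectors can be reproduced on toy data. The sketch below assumes a plain-text vector file in which each line holds a word followed by space-separated floats. The two sample lines and the count vectors are invented for illustration and are not real GloVe values.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical word-count vectors: every entry is non-negative,
# so the dot product, and hence the cosine, cannot be negative.
doc_a = [3, 0, 1, 2]
doc_b = [0, 4, 1, 0]
count_sim = cosine_similarity(doc_a, doc_b)

# Hypothetical lines in an assumed "word v1 v2 ... vd" text format.
lines = [
    "king 0.5 -0.3 0.8",
    "ice -0.6 0.4 -0.7",
]

# Parse each line into a word and its list of float components.
vectors = {}
for line in lines:
    word, *values = line.split()
    vectors[word] = [float(v) for v in values]

# Check whether any component in the file is negative.
has_negative = any(v < 0 for vec in vectors.values() for v in vec)
embedding_sim = cosine_similarity(vectors["king"], vectors["ice"])

print(count_sim, has_negative, embedding_sim)
```

A quick scan like `has_negative` is usually enough to tell whether a vector file can produce negative cosine similarities at all.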
